🚀 Bridging the gap between precision manufacturing and Physical AI.

Welcome. I am a Robotics AI Researcher specializing in contact-aware robot learning, imitation learning pipelines, and generative policy architectures. My work focuses on giving robotic systems the physical intuition required to solve complex, high-precision industrial tasks.

Explore my timeline below to see how I connect deep-tech software engineering with real-world hardware deployment.

💼 Experience :

Master's Thesis - Contact-Aware Visuomotor Policies for Robust Dexterous Object Manipulation

Company: Prehensio GmbH

Time: Feb. 2026 – Present

Description:

Currently assigned to the spin-out Prehensio GmbH as part of my ongoing research position at Fraunhofer IPA.

Skills:

Imitation Learning, Tactility, Flow Matching Models, PyTorch, Teleoperation, ROS2, Python, Computer Vision

Research Project - Dexterous Imitation Learning for Vision-Action Models

Company: Fraunhofer Institute IPA

Time: Jul. 2025 – Feb. 2026

Description:

- Developed a teleoperation and imitation learning pipeline in ROS2 using a 3D camera and a data glove.

- Trained Diffusion Policies to enable robust grasping capabilities in multi-object scenes.

- Created a full MoveIt model for safe and reliable motion planning and execution.

- Applied the resulting policies to industrial automation scenarios.

Skills:

Imitation Learning, Diffusion Models, PyTorch, Teleoperation, ROS2, Python, Computer Vision

Research Assistant

Company: Fraunhofer Institute IPA

Time: Jan. 2025 – Jul. 2025

Description:

Focused on robots with dexterous hands for complex industrial processes:

- Developed object pose estimation and tracking algorithms using a multi-camera setup.

- Integrated solutions into ROS2 and optimized performance with TensorRT and C++.

- Improved robustness and accelerated an existing model by 10x for industrial deployment.

Skills:

PyTorch, Segmentation, ROS2, Python, Computer Vision, TensorRT, Docker

Bachelor's Thesis: AI and Large Language Models

Company: SCHUNK – Hand in Hand for Tomorrow

Time: Mar. 2024 – Sept. 2024

Description:

Evaluation and implementation of a retrieval-augmented generation (RAG) approach for product data search using generative AI.

Skills:

Python, LangChain, Vector DBs, ChatGPT, PyTorch, Docker, Linux

Software Engineer (Working Student)

Company: IDS Imaging Development Systems GmbH

Time: Sept. 2023 – Mar. 2024

Description:

Worked on IDS lighthouse, a cloud-based AI vision studio:

- Integrated an autolabelling service for easier and faster dataset creation.

- Dockerised all services for easier development, testing and deployment.

- Evaluated and integrated new SOTA detection models.

Skills:

Python, Docker, Git, TensorFlow, Computer Vision, Segmentation

Intern – Computer Vision

Company: IDS Imaging Development Systems GmbH

Time: Mar. 2023 – Sept. 2023

Description:

- Built multi-language examples for using the REST API of the NXT camera.

- Developed a cloud dashboard to monitor user performance.

- Trained and evaluated an image detection model for a client project.

- Created an AI-based service for image labeling and segmentation.

Skills:

C/C++, Python, TypeScript, React, PyTorch, Docker, REST, TensorFlow

Test Engineer (Working Student)

Company: Bosch

Time: Mar. 2022 – Feb. 2023

Description:

Worked on engineering, testing, and development of hydraulic systems.

Skills:

Engineering, Testing, R&D

Student Assistant

Company: Heilbronn University – Center for Industrial AI

Time: Jun. 2022 – Dec. 2022

Description:

Assisted in hardware prototyping and embedded projects

Skills:

C, C++, Python, Arduino, Raspberry Pi

🎓 Education :

Master of Science – Autonomous Systems

Institution: University of Stuttgart

Time: Sept. 2024 – Jan. 2027

Description:

- Research project at Fraunhofer IPA on imitation learning of human grasping tasks with a dexterous robotic arm for industrial applications.

- Benchmark for evaluating SOTA VLMs on logic-game-related problem-solving challenges.

- Literature review on Visuomotor Policies and Vision-Language-Action Models for Robotic Manipulation.

- Literature review on the application of generative AI in large-scale codebases.

Skills:

Foundation Models, Computer Vision, Robotics, Deep Learning, Reinforcement Learning, Artificial Intelligence, LLM

Bachelor of Engineering – Mechatronics and Robotics

Institution: Heilbronn University

Time: Nov. 2020 – Aug. 2024

Description:

Bachelor's thesis GPA: 4.0/4.0

Topic: Retrieval-augmented generation (RAG) for product data search with generative AI

Seminar: Time series prediction of a chaotic double pendulum using neural networks

Projects:

- Traffic sign recognition with a CNN

- Chatbot using LSTMs

Skills:

TensorFlow, Machine Learning, Python, Fusion 360, C++, PyTorch, Git, Time Series Analysis, Image Processing, Robotics, MATLAB, CATIA

Agents YouTube Shorts Pipeline

This project automates a complete product-to-video workflow for YouTube Shorts using an AI agent swarm. It orchestrates a multi-step pipeline that pulls Amazon product links from a queue, scrapes and analyzes product details via vision LLMs, generates short-form scripts, and synthesizes visual assets using FLUX.2. The core innovation lies in the automated video generation via ComfyUI (Wan 2.2) and Edge TTS audio fusion, culminating in an automated upload to YouTube via the Google Cloud API.

Features :

- Multi-agent orchestration using CrewAI to manage the sequential workflow from raw link to final upload.

- Automated product scraping and vision-based analysis of product images for context-aware scriptwriting.

- Dynamic image and video generation integrating OpenRouter (FLUX.2) and local ComfyUI API pipelines (Wan 2.2).

- Audio-visual synchronization using Edge TTS, FFmpeg video fusion, and automated YouTube Data API uploading.
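The sequential flow listed above can be pictured as a chain of stages that share one job record. The following is a minimal sketch in plain Python, not the project's CrewAI code: the `Job` class, the stage functions, and every stub return value are hypothetical stand-ins for the real scraping, generation, and upload steps.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Job:
    """State that travels through the pipeline for one product link."""
    link: str
    artifacts: dict = field(default_factory=dict)
    log: list = field(default_factory=list)


def scrape(job: Job) -> None:
    # Placeholder: a real stage would fetch the product page and its images.
    job.artifacts["product"] = {"title": "placeholder title"}


def write_script(job: Job) -> None:
    # Placeholder: a real stage would prompt an LLM with the product details.
    title = job.artifacts["product"]["title"]
    job.artifacts["script"] = f"Short script about {title}"


def render_video(job: Job) -> None:
    # Placeholder: a real stage would run image/video generation, TTS and FFmpeg.
    job.artifacts["video_path"] = "placeholder.mp4"


def upload(job: Job) -> None:
    # Placeholder: a real stage would call the upload API.
    job.artifacts["uploaded"] = True


# Order matters: each stage reads what the previous one stored.
STAGES: list[Callable[[Job], None]] = [scrape, write_script, render_video, upload]


def run_pipeline(queue: deque) -> list:
    """Process queued links one at a time, running every stage in order."""
    finished = []
    while queue:
        job = Job(link=queue.popleft())
        for stage in STAGES:
            stage(job)
            job.log.append(stage.__name__)
        finished.append(job)
    return finished


jobs = run_pipeline(deque(["https://example.com/product-a"]))
print(jobs[0].log)
```

Keeping all intermediate results on one record makes it easy to inspect where a run stopped if a stage fails.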

Tech Stack :

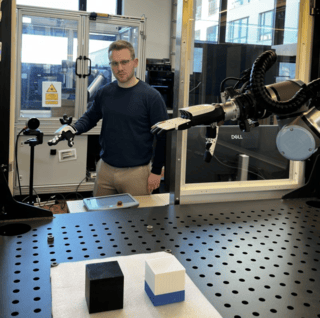

Dexterous Imitation Learning for Visuomotor Policies

I built a complete teleoperation setup to control a robot hand and arm, and used it to collect demonstrations and train an adapted 3D Diffusion Policy. My focus was on industrial scenarios that involve handling multiple identical parts. This is normally a nightmare for visuomotor policies because the model cannot distinguish between identical objects based solely on the global features of the vision encoder, and current solutions that rely on textual conditioning are insufficient for the industrial context.

In addition to running experiments on how the policy behaves with unknown objects and areas, I found a method to train the model to indicate when it has finished the task, so that control can reliably switch back to a classical motion planner.

Features :

- Developed a teleoperation and imitation learning pipeline in ROS2 using a 3D camera and a data glove.

- Trained Diffusion Policies to enable robust grasping capabilities in multi-object scenes.

- Created a full MoveIt model for safe and reliable motion execution planning.

- Applied the resulting policies to industrial automation scenarios.
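Handing control back to a classical motion planner needs a stable completion signal. The sketch below shows one way a downstream controller might consume such a signal; it is not the training method used in the project. It assumes the policy emits a per-step "done" probability, and `HandoverMonitor`, `threshold`, and `patience` are hypothetical names and values chosen for illustration.

```python
class HandoverMonitor:
    """Signal handover once the done probability stays high for several steps."""

    def __init__(self, threshold: float = 0.9, patience: int = 5):
        self.threshold = threshold  # assumed cut-off for "task finished"
        self.patience = patience    # consecutive steps required above it
        self._streak = 0

    def update(self, done_prob: float) -> bool:
        """Feed one per-step done probability; return True when handover is due."""
        if done_prob >= self.threshold:
            self._streak += 1
        else:
            self._streak = 0  # a single low value resets the count
        return self._streak >= self.patience


monitor = HandoverMonitor(threshold=0.9, patience=3)
signal = [0.1, 0.95, 0.2, 0.92, 0.97, 0.99, 0.99]

# Index of the first step at which the monitor requests the handover.
handover_step = next((i for i, p in enumerate(signal) if monitor.update(p)), None)
print(handover_step)
```

Requiring several consecutive high values keeps a single spurious spike (step 1 in the example) from triggering an early switch.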

Traffic Sign Recognition with YOLOv8

Developed as part of a university project at Heilbronn University, this real-time traffic sign recognition system uses YOLOv8n for fast and robust detection. It handles challenging conditions like occlusion, poor lighting, and complex backgrounds by leveraging a custom synthetic dataset, multi-stage classification, and real-time frame filtering.

Features :

- Real-time traffic sign detection using YOLOv8n (Nano version for speed and efficiency).

- Custom synthetic dataset generation with COCO backgrounds and heavy augmentation.

- Two-stage classification specifically for speed limit signs.

- Frame caching logic to reduce false positives during inference.

- Visualization via UI overlay: persistent speed sign display + rotating multi-sign view.

- Trained on 3000+ synthetic images and validated with GTSDB and dashcam footage.

- Fast inference: ~0.06–0.09 seconds/frame (roughly 11–17 frames per second).
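The frame caching idea in the list above can be illustrated with a sliding-window vote: a sign is reported only if it was detected in enough of the most recent frames. This is a sketch of one common approach, not necessarily the project's logic; `FrameFilter`, `window`, and `min_hits` are hypothetical names and values.

```python
from collections import Counter, deque


class FrameFilter:
    """Confirm a label only if it appears in enough of the recent frames."""

    def __init__(self, window: int = 5, min_hits: int = 3):
        self.min_hits = min_hits
        self._history = deque(maxlen=window)  # oldest frame drops out automatically

    def update(self, labels_in_frame) -> set:
        """Add one frame's detected labels and return the confirmed labels."""
        self._history.append(set(labels_in_frame))
        counts = Counter(label for frame in self._history for label in frame)
        return {label for label, hits in counts.items() if hits >= self.min_hits}


frames = [{"speed_50"}, {"speed_50", "stop"}, {"speed_50"}, set(), {"speed_50"}]
frame_filter = FrameFilter(window=5, min_hits=3)
confirmed = [frame_filter.update(frame) for frame in frames]
print(confirmed)
```

In this example the one-frame "stop" detection is never confirmed, while "speed_50" survives the frame in which it is briefly missed.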

Tech Stack :

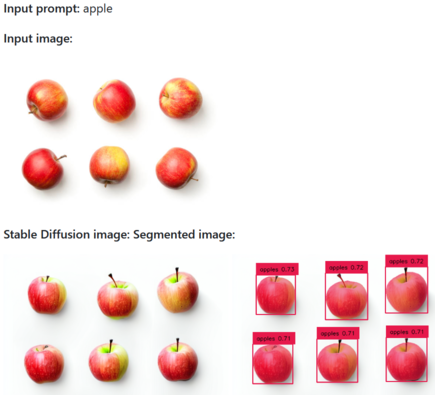

Stabled Grounding SAM

Stabled Grounding SAM is a powerful tool for generating synthetic datasets with pre-segmented images. It combines Stable Diffusion, Grounding DINO, and Segment Anything to create annotated datasets from just a single input image and a label file.

Features :

- Generates synthetic images from a single input image using Stable Diffusion's `img2img`.

- Automatically detects and labels objects using Grounding DINO.

- Refines segmentations using Meta’s Segment Anything model.

- Outputs datasets in YOLO format for easy training integration.

- Great for quickly building vision datasets without manual labeling.
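To make the YOLO output step concrete, the sketch below converts a binary segmentation mask into one YOLO bounding-box label line (`class x_center y_center width height`, all normalized to the image size). It is an illustration under assumptions: the tool may write segmentation polygons instead of boxes, and `mask_to_yolo_line` is a hypothetical helper, not a function from the project.

```python
def mask_to_yolo_line(mask, class_id: int) -> str:
    """Convert a binary mask (list of rows of 0/1) into a YOLO box label line."""
    height = len(mask)
    width = len(mask[0])
    ys = [y for y, row in enumerate(mask) if any(row)]
    xs = [x for row in mask for x, value in enumerate(row) if value]
    if not ys:
        raise ValueError("mask is empty, no object to label")

    # Pixel-edge bounds: +1 so the box covers the last foreground pixel.
    x_min, x_max = min(xs), max(xs) + 1
    y_min, y_max = min(ys), max(ys) + 1

    # YOLO expects the box centre and size relative to the image dimensions.
    cx = (x_min + x_max) / 2 / width
    cy = (y_min + y_max) / 2 / height
    w = (x_max - x_min) / width
    h = (y_max - y_min) / height
    return f"{class_id} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}"


mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
line = mask_to_yolo_line(mask, class_id=0)
print(line)  # 0 0.500000 0.500000 0.500000 0.500000
```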

Get in Touch! 👋

Whether you want to discuss research collaborations, physical AI deployment, or deep-tech engineering challenges, feel free to reach out.

I am always open to connecting with founders, researchers, and engineers working at the intersection of robotics and machine learning.

Drop me a message, and I will get back to you as soon as possible.

🌐 Connect with me online:

Looking forward to connecting! 📩